You may have heard the outlandish claim: Bill Gates is using the Covid-19 vaccine to implant microchips in everyone so he can track where they go. It’s false, of course, debunked repeatedly by journalists and fact-checking organisations.

Yet the falsehood persists online – in fact, it is one of the most popular conspiracy theories making the rounds.

In May 2020, a Yahoo/YouGov poll found that 44 per cent of Republicans (and 19 per cent of Democrats) said they believed it.

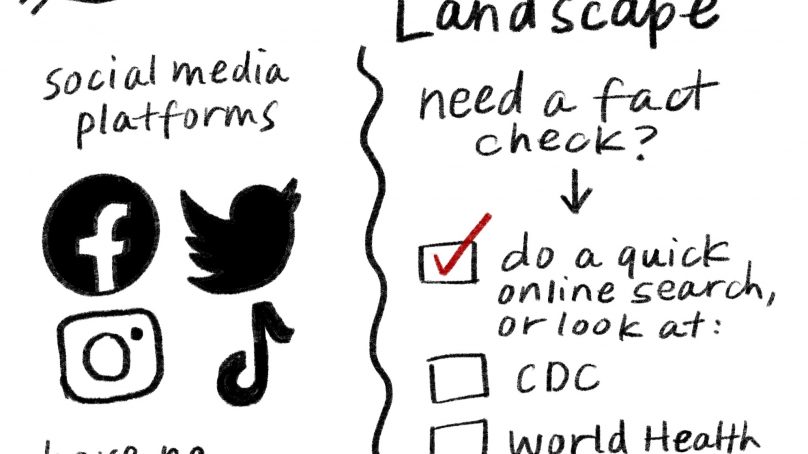

This particular example is just a small part of what the World Health Organization now calls an infodemic – an unprecedented glut of information that may be misleading or false. Misinformation – false or inaccurate information of all kinds, from honest mistakes to conspiracy theories – and its more intentional subset, disinformation, are both thriving, fuelled by a once-in-a-generation pandemic, extreme political polarisation and a brave new world of social media.

“The scale of reach that you have with social media and the speed at which it spreads is really like nothing humanity has ever seen,” says Jevin West, a disinformation researcher at the University of Washington.

One reason for this reach is that so many people are participants on social media. “Social media is the first type of mass communication that allows regular users to produce and share information,” says Ekaterina Zhuravskaya, an economist at the Paris School of Economics who co-authored an article on the political effects of the Internet and social media in the 2020 Annual Review of Economics.

Trying to stamp out online misinformation is like chasing an elusive and ever-changing quarry, researchers are learning. False tales – often intertwined with elements of truth – spread like a contagion across the Internet.

They also evolve over time, mutating into more infectious strains, fanning across social media networks via constantly changing pathways and hopping from one platform to the next.

Misinformation doesn’t simply diffuse like a drop of ink in water, says Neil Johnson, a physicist at George Washington University who studies misinformation. “It’s something different. It kind of has a life of its own.”

The Gates fiction is a case in point. On March 18, 2020, Gates mentioned in an online forum on Reddit that electronic records of individuals’ vaccine history could be a better way to keep track of who had received the Covid-19 vaccine than paper documents, which can be lost or damaged. The very next day, a website called Biohackerinfo.com posted an article claiming that Gates wanted to implant devices into people to record their vaccination history.

A day after that, a YouTube video expanded the narrative, explicitly claiming that Gates wanted to track people’s movements. That video was viewed nearly two million times. In April, former Donald Trump advisor Roger Stone repeated the conspiracy theory on a radio programme, and the New York Post then covered his remarks.

Fox News host Laura Ingraham also referred to Gates’s intent to track people in an interview with then US Attorney General William Barr.

Although examples like this make it tempting to think that websites like Biohackerinfo.com are the ultimate sources of most online misinformation, research suggests that’s not so. Even when such websites churn out misleading or false articles, they are often pushing what people have already been posting online, says Renee DiResta, a disinformation researcher at the Stanford Internet Observatory.

Indeed, almost immediately after Gates wrote about digital certificates, Reddit users started commenting about implantable microchips, which Gates had never mentioned.

In fact, research suggests that malicious websites, bots and trolls make up a relatively small portion of the misinformation ecosystem. Instead, most misinformation emerges from regular people, and the biggest purveyors and amplifiers are a handful of human super-spreaders. For example, a study of Twitter during the 2016 US presidential election found that in a sample of more than 16,000 users, six per cent of those who shared political news also shared misinformation.

But the vast majority – 80 per cent – of the misinformation came from just 0.1 per cent of users. Misinformation is amplified even more when those super-spreaders, such as media personalities and politicians like Donald Trump (until he was banned from Twitter and other sites), have access to millions of people on social and traditional media.

Thanks to such super-spreaders, misinformation spreads in a way that resembles an epidemic. In a recent study, researchers analysed the rise in the number of people engaging with Covid-19-related topics on Twitter, Reddit, YouTube, Instagram and a right-leaning network called Gab.

Fitting epidemiological models to the data, they calculated R-values which, in epidemiology, represent the average number of people a sick person would infect. In this case, the R-values describe the contagiousness of Covid-19-related topics on social media platforms – and though the R-value differed depending on the platform, it was always greater than one, indicating exponential growth and, possibly, an infodemic.
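
To see why an R-value of one is the threshold, consider a minimal sketch. It is not the researchers' fitted model; it is a simplified illustration that assumes each engaged user draws in, on average, R new users per "generation" of sharing. The function name, the starting count of 100 users and the three R-values are hypothetical choices made only for this example.

```python
def engaged_per_generation(r_value, initial=100.0, generations=5):
    """Expected number of newly engaged users in each generation of sharing.

    Simplifying assumption: every engaged user brings in r_value new users
    on average, so each generation is r_value times the size of the last.
    """
    counts = [initial]
    for _ in range(generations):
        counts.append(counts[-1] * r_value)
    return counts


# Hypothetical R-values: one below, one at and one above the threshold.
for r in (0.8, 1.0, 1.5):
    series = engaged_per_generation(r)
    print(f"R = {r}: " + ", ".join(f"{n:.0f}" for n in series))
```

With R below one, each generation is smaller than the last and the topic fades out. At exactly one it holds steady. Above one, each generation is larger than the one before, which is the exponential growth the study reports.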

Differences in how information spreads depend on features of the particular platform and its users, not on the reliability of the information itself, says Walter Quattrociocchi, a data scientist at the University of Rome.

He and his colleagues analysed posts and reactions – such as likes and comments – about content from both reliable and unreliable websites, the latter being those that show extreme bias and promote conspiracies, as determined by independent fact-checking organisations. The number of posts and reactions regarding both types of content grew at the same rate, they found.

- A Knowable magazine report