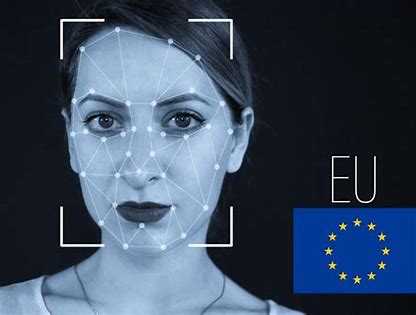

In 2019, guards on the borders of Greece, Hungary and Latvia began testing an artificial-intelligence-powered lie detector. The system, called iBorderCtrl, analysed facial movements to attempt to spot signs a person was lying to a border agent.

The trial was propelled by nearly $5 million in European Union research funding and almost 20 years of research at Manchester Metropolitan University, in the UK.

The trial sparked controversy. Polygraphs and other technologies built to detect lies from physical attributes have been widely declared unreliable by psychologists. Soon, errors were reported from iBorderCtrl, too.

Media reports indicated that its lie-prediction algorithm didn’t work, and the project’s own website acknowledged that the technology “may imply risks for fundamental human rights.”

This month, Silent Talker, a company spun out of Manchester Met that made the technology underlying iBorderCtrl, dissolved. But that’s not the end of the story. Lawyers, activists and lawmakers are pushing for a European Union law to regulate AI, which would ban systems that claim to detect human deception in migration – citing iBorderCtrl as an example of what can go wrong.

Former Silent Talker executives could not be reached for comment.

A ban on AI lie detectors at borders is one of thousands of amendments to the AI Act being considered by officials from EU nations and members of the European Parliament. The legislation is intended to protect EU citizens’ fundamental rights, like the right to live free from discrimination or to declare asylum.

It labels some use cases of AI “high-risk,” some “low-risk,” and slaps an outright ban on others. Those lobbying to change the AI Act include human rights groups, trade unions and companies like Google and Microsoft, which want the AI Act to draw a distinction between those who make general-purpose AI systems and those who deploy them for specific uses.

Last month, advocacy groups including European Digital Rights and the Platform for International Cooperation on Undocumented Migrants called for the act to ban the use of AI polygraphs that measure things like eye movement, tone of voice, or facial expression at borders.

Statewatch, a civil liberties nonprofit, released an analysis warning that the AI Act as written would allow use of systems like iBorderCtrl, adding to Europe’s existing “publicly funded border AI ecosystem.”

The analysis calculated that over the past two decades, roughly half of the €341 million ($356 million) in funding for use of AI at the border, such as profiling migrants, went to private companies.

The use of AI lie detectors at borders effectively creates new immigration policy through technology, labelling everyone as suspicious, says Petra Molnar, associate director of the non-profit Refugee Law Lab.

“You have to prove that you are a refugee, and you’re assumed to be a liar unless proven otherwise,” she says. “That logic underpins everything. It underpins AI lie detectors and it underpins more surveillance and pushback at borders.”

Molnar, an immigration lawyer, says people often avoid eye contact with border or migration officials for innocuous reasons – such as culture, religion, or trauma – but doing so is sometimes misread as a signal that a person is hiding something. Humans often struggle with cross-cultural communication or with speaking to people who have experienced trauma, she says, so why would people believe a machine can do better?

The first draft of the AI Act released in April 2021 listed social credit scores and real-time use of facial recognition in public places as technologies that would be banned outright. It labelled emotion recognition and AI lie detectors for border or law enforcement as high-risk, meaning deployments would have to be listed on a public registry. Molnar says that wouldn’t go far enough, and the technology should be added to the banned list.

Dragoș Tudorache, one of two rapporteurs appointed by members of the European Parliament to lead the amendment process, said lawmakers filed amendments this month, and he expects a vote on them by late 2022.

The parliament’s rapporteurs in April recommended adding predictive policing to the list of banned technologies, saying it “violates the presumption of innocence as well as human dignity,” but did not suggest adding AI border polygraphs. They also recommended categorising systems for patient triage in health care or deciding whether people get health or life insurance as high-risk.

While the European Parliament proceeds with the amendment process, the Council of the European Union will also consider amendments to the AI Act. There, officials from countries including the Netherlands and France have argued for a national security exemption to the AI Act, according to documents obtained with a freedom of information request by the European Centre for Not-for-Profit Law.

Vanja Skoric, a programme director with the organisation, says a national security exemption would create a loophole that AI systems that endanger human rights – such as AI polygraphs – could slip through and into the hands of police or border agencies.

Final measures to pass or reject the law could take place by late next year. Before members of the European Parliament filed their amendments on June 1, Tudorache said, “If we get amendments in the thousands as some people anticipate, the work to actually produce some compromise out of thousands of amendments will be gigantic.”

He now says about 3,300 amendment proposals to the AI Act were received but thinks the AI Act legislative process could wrap up by mid-2023.

Concerns that data-driven predictions can be discriminatory are not just theoretical. An algorithm deployed by the Dutch tax authority to detect potential child benefit fraud between 2013 and 2020 was found to have harmed tens of thousands of people, and led to more than 1,000 children being placed in foster care.

The flawed system used data such as whether a person had a second nationality as a signal for investigation, and it had a disproportionate impact on immigrants.

The Dutch social benefits scandal might have been prevented or lessened had Dutch authorities produced an impact assessment for the system, as proposed by the AI Act, that could have raised red flags, says Skoric.

She argues that the law must have a clear explanation for why a model earns certain labels, for example when rapporteurs moved predictive policing from the high-risk category to a recommended ban.

Alexandru Circiumaru, European public policy lead at the independent research and human rights group the Ada Lovelace Institute, in the UK, agrees, saying the AI Act needs to better explain the methodology that leads to a type of AI system being recategorised from banned to high-risk or the other way around.

“Why are these systems included in those categories now, and why weren’t they included before? What’s the test?” he asks.

More clarity on those questions is also necessary to prevent the AI Act from quashing potentially empowering algorithms, says Sennay Ghebreab, founder and director of the Civic AI Lab at the University of Amsterdam.

Profiling can be punitive, as in the Dutch benefits scandal, and he supports a ban on predictive policing. But other algorithms can be helpful – for example, in helping resettle refugees by profiling people based on their background and skills.

A 2018 study published in Science calculated that a machine-learning algorithm could expand employment opportunities for refugees by more than 40 percent in the United States and by more than 70 percent in Switzerland, at little cost.

“I don’t believe we can build systems that are perfect,” he says. “But I do believe that we can continuously improve AI systems by looking at what went wrong and getting feedback from people and communities.”

Many of the thousands of suggested changes to the AI Act won’t be integrated into the final version of the law. But Petra Molnar of the Refugee Law Lab, who has suggested nearly two dozen changes, including banning systems like iBorderCtrl, says that it’s an important time to be clear about which forms of AI should be banned or deserve special care.

“This is a really important opportunity to think through what we want our world to look like, what we want our societies to be like, what it actually means to practice human rights in reality, not just on paper,” she says. “It’s about what we owe to each other, what kind of world we’re building, and who was excluded from these conversations.”

- A Wired report